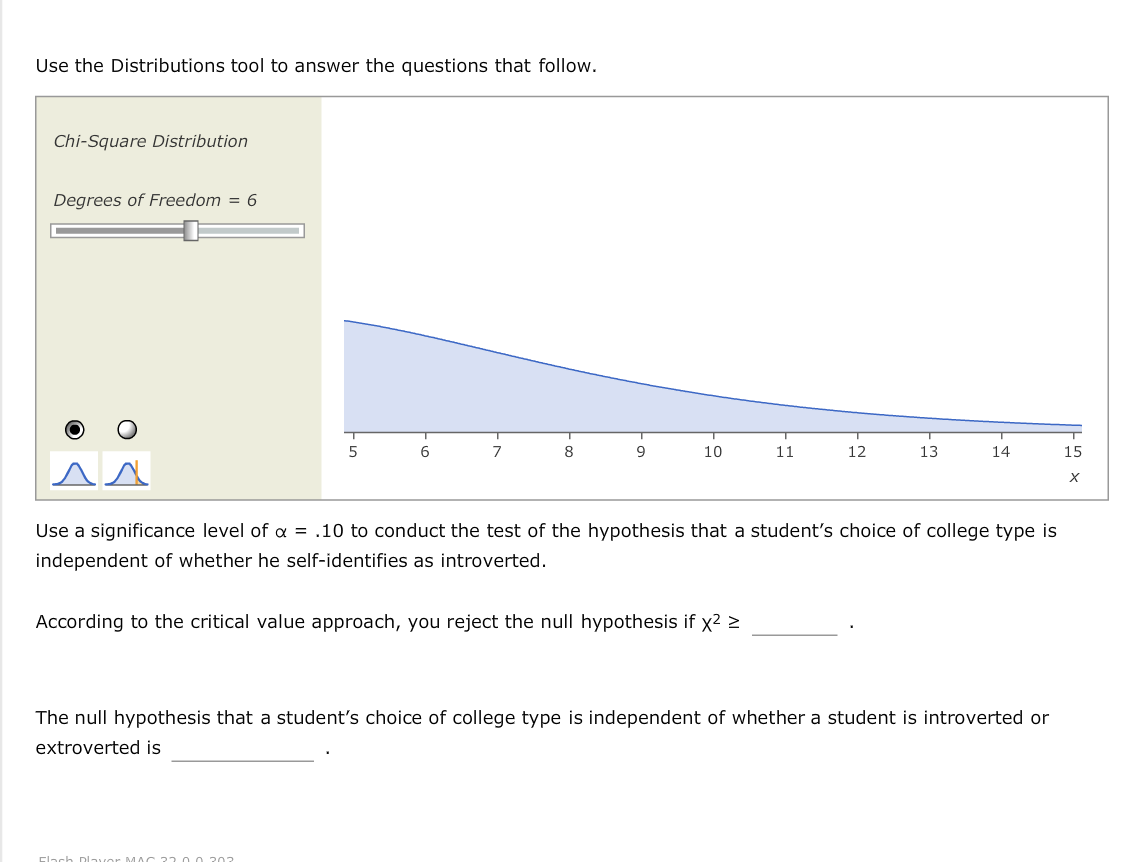

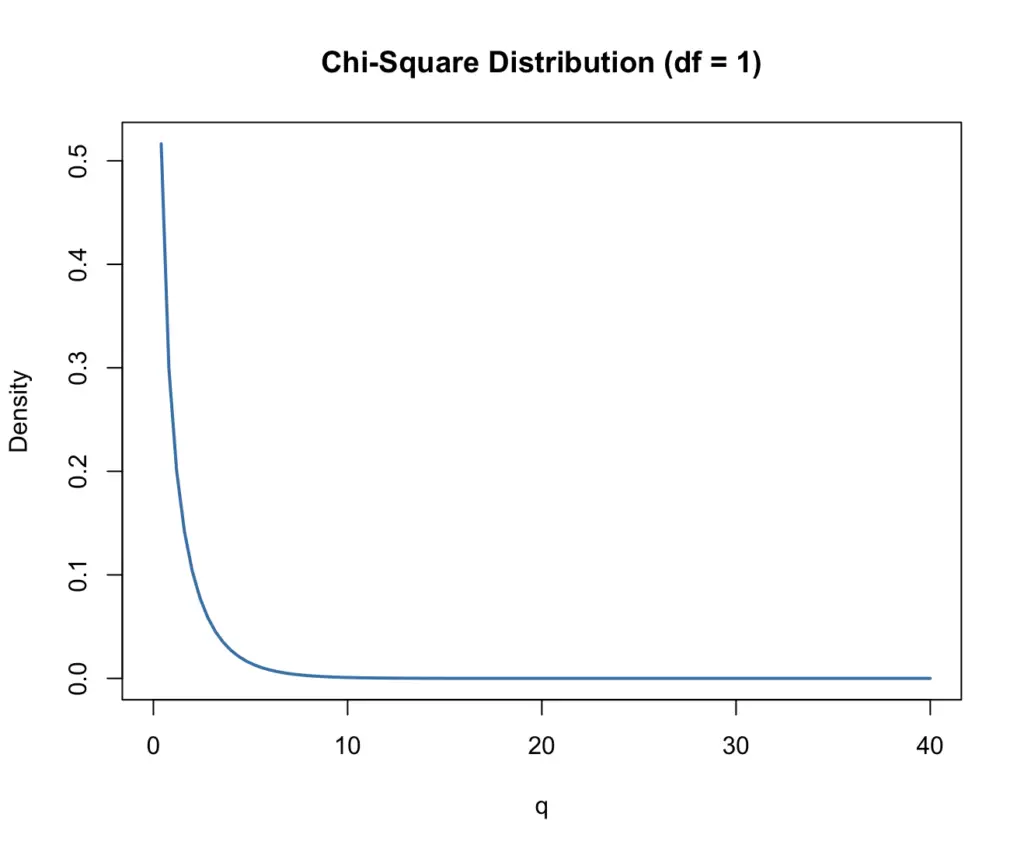

In a nutshell, the Chi-Square distribution models the distribution of the sum of squares of several independent standard normal random variables. Thus, you can get to the simplest form of the Chi-Square distribution from a standard normal random variable X by simply squaring X. In the context of confidence intervals, we can measure the difference between a population standard deviation and a sample standard deviation using the Chi-Square distribution. That measurement depends on the degrees of freedom.

What are Degrees of Freedom?

The number of independent random variables that go into the Chi-Square distribution is known as the degrees of freedom (df). The random variable for a chi-square distribution with k degrees of freedom is the sum of k independent, squared standard normal variables:

$$Q_k = X_1^2 + X_2^2 + \dots + X_k^2$$

There is a different chi-square curve for each df. The curve is nonsymmetrical and skewed to the right.

There isn't a clear-cut definition of degrees of freedom. But as the name implies, you can think of it as the number of variables that can vary. The more variables you add, the more variability you introduce, and thus the more degrees of freedom you have. Please note that this is by no means a rigorous definition. A professional statistician might disagree with it.

The accompanying plot shows how the pdf of the Chi-Square distribution changes based on the degrees of freedom. Note: Here we are using the Greek letter χ, which looks confusingly similar to x. Plot made available by user Geek3 on Wikipedia.

Since we are adding up the squared values of k draws from a standard normal distribution, the bulk of our values will cluster around higher values of q (or χ) as k grows. In fact, the mean of the Chi-Square distribution is equal to the degrees of freedom. We can use the Chi-Square distribution to construct confidence intervals for the standard deviation of normally distributed data.
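The sum-of-squares definition can be checked with a quick simulation. The sketch below is only an illustration, not part of the original post: the function name `simulate_chi_square`, the seed, and the number of draws are all assumptions chosen for the example. It draws k standard normal values, squares and sums them, and compares the sample mean and variance with the theoretical values k and 2k.

```python
import random
import statistics


def simulate_chi_square(k, n_draws, seed=0):
    """Draw n_draws values of Q_k = X_1^2 + ... + X_k^2 with X_i ~ N(0, 1).

    Hypothetical helper for illustration; uses only the standard library.
    """
    rng = random.Random(seed)
    return [
        sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))
        for _ in range(n_draws)
    ]


for k in (1, 3, 10):
    draws = simulate_chi_square(k, n_draws=20000)
    sample_mean = statistics.fmean(draws)
    sample_var = statistics.variance(draws)
    # Theory: mean = k, variance = 2k. The simulated values should be close.
    print(f"k={k}: mean={sample_mean:.2f} (theory {k}), "
          f"variance={sample_var:.2f} (theory {2 * k})")
```

As k grows, the simulated mean moves to the right along with it, which is the "bulk of values clusters around higher values" behavior described in the text.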
The Chi-Square distribution is commonly used to measure how well an observed distribution fits a theoretical one. If you want to know how to perform chi-square testing for independence or goodness of fit, check out this post. This post discusses the concept of degrees of freedom and shows how to construct Chi-Square confidence intervals. For those interested, the last section discusses the relationship between the chi-square and the gamma distribution.

The mean of the chi-square distribution is the degrees of freedom, and the variance is twice the degrees of freedom, so the standard deviation is $\sqrt{2k}$ for $k$ degrees of freedom. If $Y$ is chi-square distributed with $k$ degrees of freedom, that is, defined as the sum of $k$ squared independent standard normal random variables, then the probability density function of $Y$ is

$$f(y) = \frac{1}{2^{k/2}\,\Gamma(k/2)}\, y^{k/2-1} e^{-y/2}, \quad y > 0.$$

When a comparison is made between one sample and another, as in table 8.1, a simple rule is that the degrees of freedom equal (number of columns minus one) x (number of rows minus one).

For a goodness-of-fit test with $k$ bins, some books give the degrees of freedom as $k-m$ and others as $k-m-1$. Miller and Freund actually specify that their $m$ is "the number of quantities obtained from the observed data that are needed to calculate the expected frequencies" (8th ed, p296). The difference from what you said that they say is critical, since the total count is something you calculate from the data. Now if you look at their examples, the total count is included in $m$ quite explicitly (there's an example on the very same page they define their $m$ on). The ones that specify $k-m-1$ define $m$ in a way that doesn't include the total count. Which is to say, when you look properly, everyone agrees, since their definitions of $m$ differ by 1 in just the right way that they both give the same result.

So all you need to do now is figure out how many parameters you estimate in each case and then include the 1 in the appropriate place for whichever formula you use (and that number of parameters is NOT always the same even if you test for the same distribution; testing a Poisson(10) is not the same as testing a Poisson with unspecified $\lambda$). Just count how many parameters you estimate, then add 1 when you use the total count. So for example, if you estimate both parameters of the normal, you'd normally subtract 3 d.f. If you estimate one parameter, you'd subtract 2, and so on. If both parameters are specified, you'd only subtract 1.

However, and this is a pretty big caveat which quite a few books get wrong, those formulas actually only apply when the parameters are estimated from the grouped data. If you estimate parameters from ungrouped data (e.g. you calculate mean and variance of a supposedly normal sample, then split it into bins for testing for normality) then you don't have a $\chi^2$ distribution at all. On the other hand, the distribution function will lie between that of a $\chi^2_{k-m-1}$ and a $\chi^2_{k-1}$ (where here $m$ doesn't include the total count), so you can at least get bounds on the p-value; alternatively you could use simulation to get a p-value. In R, the pearson.test function in package nortest offers both ($k-m-1$ is the default, but you can get the other bound with a change of a default argument).
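The counting rule for goodness-of-fit tests is easy to get wrong by one, so here is a minimal sketch of it in Python. The function names (`gof_df`, `gof_df_total_count_in_m`, `p_value_df_bounds`) and the choice of 10 bins are hypothetical and exist only for this example; they are not taken from any library or from the texts cited above.

```python
def gof_df(n_bins, n_estimated_params):
    """Degrees of freedom under the k - m - 1 convention.

    Here m counts only the estimated parameters, not the total count.
    """
    df = n_bins - n_estimated_params - 1
    if df < 1:
        raise ValueError("need more bins than estimated parameters plus one")
    return df


def gof_df_total_count_in_m(n_bins, n_quantities_from_data):
    """Same quantity under the k - m convention, where m includes the total count."""
    return gof_df(n_bins, n_quantities_from_data - 1)


def p_value_df_bounds(n_bins, n_estimated_params):
    """(lower, upper) df that bracket the statistic when the parameters
    were estimated from ungrouped data."""
    return gof_df(n_bins, n_estimated_params), n_bins - 1


k = 10  # hypothetical number of bins
print(gof_df(k, 2))                   # normal, mean and variance estimated -> 7
print(gof_df(k, 1))                   # one parameter estimated -> 8
print(gof_df(k, 0))                   # both parameters specified in advance -> 9
print(gof_df_total_count_in_m(k, 3))  # m = 2 parameters + total count -> 7
print(p_value_df_bounds(k, 2))        # -> (7, 9)
```

Both conventions return the same number once $m$ is counted consistently, which is the point of the argument: the definitions of $m$ differ by exactly 1.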
